Some Updates

If you’ve been following my Crypto Farm Series, then you’ve seen lots of posts from a few months back about building, troubleshooting, configuring, and monitoring cryptocurrency mining rigs. It’s been a while since I’ve had a chance to post an update on how the farm has progressed, how much capacity it has grown to, what I’ve been working on, and what lessons I’ve learned, so I figured I would take a moment to share some new developments!

Spoiler alert: the farm has grown.

I have always planned on scaling my farm’s capacity so that I could post content about solving some of the challenges that are unique to farm scale. I also wanted to build more tooling around the mining experience so that I could ultimately offer new tools to help other miners in their mining efforts. Many people are trying to solve the same problems in various ways, so I’m looking for opportunities to take those problems, build solutions for them, test them, scale them, and ultimately make mining that much more enjoyable for more people. It’s been a fun journey so far.

GPU Chip-set Wars

In my earlier posts, I outlined some AMD GPUs that I’d been focusing on for my mining efforts. I chose them because, at the time, they were the most available, best-value GPUs on the market. Of course, as many readers have discovered, RX 480s are no longer manufactured, and RX 580s and 570s are now incredibly hard to find at a reasonable price. In fact, most GPUs that are effective for mining are hard to find at reasonable prices.

AMD vs NVIDIA

So, I started my mining rig venture on AMD GPUs, specifically the AMD RX 480 chip-set. Hashing performance varied significantly across my AMD RX GPUs from different manufacturers. Some variance is to be expected, but the sheer amount was a bit surprising.

For instance, out of the box, my MSI Gaming RX 480s with 8GB of memory would only reach roughly 22-23 MH/s without vBIOS modifications or overclocking. With modifications and overclocking, I could consistently get these cards to roughly 28 MH/s, but managing heat, power consumption, and stability became real challenges. There was also a surprisingly high degree of manufacturing variance, even within the same vendor’s implementation of the RX 480/580 chip-set design. Moreover, I could get decent performance out of 570s for a lower per-card cost. All of this to say, I became disenchanted with AMD for mining Ethash-based coins like Ether.

Enter NVIDIA…

Before purchasing my first lots of GPUs, I trusted research that led me to this conclusion: NVIDIA cards are too expensive and don’t have enough of a performance edge to be more profitable than AMD. Now, after months of mining with AMD and testing the waters with some NVIDIA GTX 1070 rigs, I’ve come to a completely different conclusion. The price of AMD cards had risen from the low $200s per card to the high $200s and even into the $300s, but nothing else changed: they didn’t get better, just more expensive. NVIDIA cards also increased in price, but since they were already expensive, the percentage increase was far less dramatic, and they were, in fact, better cards.

The GTX 1070s that I run now consume 147 Watts per card at full hashing capacity vs. 225+ Watts per card for my AMD cards. Additionally, in the same environment, my AMD cards can run 8-10˚C (14-18˚F) warmer than my GTX 1070s with stock cooling. This is partly because the EVGA GTX 1070s I’m running have higher-quality heatsinks than the stock MSI AMD RX cards. Finally, the NVIDIA cards are much more stable at overclocked memory frequencies, consistently pull 31 MH/s comfortably without pushing the envelope, and have shown zero card-to-card variance so far.
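The gap in hashes per Watt can be made concrete with a few lines of Python. This is a minimal sketch that uses the GTX 1070 figures quoted here (31 MH/s at 147 W) and the AMD averages from the summary table (25 MH/s at 225 W). The `Card` class and its method names are illustrative only.

```python
from dataclasses import dataclass


@dataclass
class Card:
    name: str
    hashrate_mhs: float  # reliable hashrate in MH/s
    power_w: float       # power draw per card in Watts

    def mhs_per_watt(self) -> float:
        """Hashing efficiency: MH/s delivered per Watt consumed."""
        return self.hashrate_mhs / self.power_w

    def kwh_per_day(self) -> float:
        """Energy used when running 24 hours a day, in kWh."""
        return self.power_w * 24 / 1000


amd = Card("AMD RX 480/580 (avg)", 25, 225)
nvidia = Card("NVIDIA GTX 1070", 31, 147)

for card in (amd, nvidia):
    print(f"{card.name}: {card.mhs_per_watt():.3f} MH/s per W, "
          f"{card.kwh_per_day():.3f} kWh/day")

# How many times more hashes per Watt the GTX 1070 delivers
ratio = nvidia.mhs_per_watt() / amd.mhs_per_watt()
print(f"GTX 1070 efficiency advantage: {ratio:.2f}x")
```

With these inputs, the GTX 1070 delivers roughly 1.90x the hashes per Watt (0.211 vs. 0.111 MH/s per W) and uses 3.528 kWh/day instead of 5.4 kWh/day.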

Here is a summary of the above (all numbers are averages over the 8 months, across multiple vendors, vBIOS modifications, etc.):

| Metric | AMD RX 480/470/580/570 | NVIDIA GTX 1070 |
|---|---|---|
| Reliable Hashrate | 25 MH/s | 31 MH/s |
| Power Consumption (per card) | 225 Watts | 147 Watts |
| Price (per card) | $250 | $410 |
| AVG Temp Delta | +10˚C | -5˚C |
| Raw power cost @ $0.10/kWh | $0.54/Day | $0.3528/Day |
| Additional cooling @ $0.10/kWh | $0.015/Day† | $0/Day |
| Revenue ETH‡ | $1.25/Day | $1.41/Day |
| Profit Margin (sans capitalization) | 55.60% | 74.98% |
| Profit Margin (w/ capitalization @ 18 mo) | 19.07%‡ | 21.87%‡ |

† Estimated at roughly 300 Watts of additional cooling per 48 GPUs.

‡ Revenue is based on a $300 ETH price, a 3 ETH block reward (no uncles or transaction fees considered), and a network hashrate of 110 TH/s. Fluctuations in any of these variables will change these numbers; the estimate is designed to be conservative.
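The two cost rows in the table follow from running each card 24 hours a day at its stated draw $P$ (in Watts) and $0.10/kWh. The cooling row assumes the roughly 300 Watts of extra cooling is split evenly across 48 GPUs; the even split is my reading of the footnote.

```latex
\begin{aligned}
C_{\text{power}} &= \frac{P \times 24}{1000}\ \text{kWh/day} \times \$0.10/\text{kWh} \\
C_{\text{power}}^{\text{AMD}} &= \frac{225 \times 24}{1000} \times 0.10 = \$0.54/\text{day} \\
C_{\text{power}}^{\text{NVIDIA}} &= \frac{147 \times 24}{1000} \times 0.10 = \$0.3528/\text{day} \\
C_{\text{cooling}}^{\text{AMD}} &= \frac{300}{48} \times \frac{24}{1000} \times 0.10 = 6.25 \times 0.024 \times 0.10 = \$0.015/\text{day}
\end{aligned}
```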

‡ AMD (18 months = 547.5 days):

(($0.54 + $0.015) * 547.5 days) + $250 card = $553.8625 cost

$1.25 * 547.5 days = $684.375 revenue

($684.375 - $553.8625) / $684.375 = 19.07% margin

‡ NVIDIA (18 months = 547.5 days):

($0.3528 * 547.5 days) + $410 card = $603.158 cost

$1.41 * 547.5 days = $771.975 revenue

($771.975 - $603.158) / $771.975 = 21.87% margin

As you can see in the table above, even with conservative calculations for a single-coin mining configuration, the EVGA NVIDIA GTX 1070 8GB cards I’ve been running for the past couple of months have outperformed my AMD cards on every metric except up-front price.
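The margin rows can be reproduced with a short Python sketch. Its inputs are the daily revenue, power, cooling, and card-price figures from the table. "Sans capitalization" is treated as running costs only, and "with capitalization" adds the card price to 18 months of running costs. The variable names are illustrative.

```python
# Per-card daily figures and card price, taken from the summary table
CARDS = {
    "AMD RX 480/470/580/570": {"revenue": 1.25, "power": 0.54, "cooling": 0.015, "price": 250},
    "NVIDIA GTX 1070": {"revenue": 1.41, "power": 0.3528, "cooling": 0.0, "price": 410},
}
DAYS = 365 * 1.5  # 18 months = 547.5 days


def margin(revenue: float, cost: float) -> float:
    """Profit margin as a fraction of revenue."""
    return (revenue - cost) / revenue


results = {}
for name, c in CARDS.items():
    daily_cost = c["power"] + c["cooling"]
    # Sans capitalization: daily running costs only, card price ignored
    operating = margin(c["revenue"], daily_cost)
    # With capitalization: card price added to 18 months of running costs
    capitalized = margin(c["revenue"] * DAYS, daily_cost * DAYS + c["price"])
    results[name] = (operating, capitalized)
    print(f"{name}: {operating:.2%} operating, {capitalized:.2%} capitalized")
```

This prints 55.60% / 19.07% for AMD and 74.98% / 21.87% for NVIDIA, which matches the table. Changing `DAYS` shows how quickly the higher NVIDIA card price is earned back.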

Maintenance Costs

So far, the main maintenance cost I’ve had to deal with is replacing GPU fans. I’ve been disappointed with the MSI Gaming RX series fans, as they don’t seem to last more than a few months of running around the clock. Granted, I’m sure they weren’t designed for this kind of use. Additionally, while I was getting used to heat dissipation challenges, I typically ran them at higher RPMs (100%) for longer. The NVIDIA GPUs run at much lower fan RPMs because they consume less power, and therefore produce less heat, and because the heatsinks on my EVGA cards are higher quality. I don’t expect to run into this issue as often with the EVGA cards.

Getting replacement OEM fans for GPUs isn’t as trivial as I had hoped. Very few OEM fans seem to be available, and in the rare case that you find some, they’re extremely expensive considering their performance. I’ve found that it’s actually cheaper to simply buy a brand-new aftermarket heatsink/fan combination and get the added benefit of a higher-quality heatsink.

This is how I’ve become quite familiar with ARCTIC’s line of products.

This particular ARCTIC Accelero Twin Turbo II VGA Cooler is very reasonably priced, especially compared with similarly priced aftermarket GPU fans that come with neither auxiliary heatsinks nor a main GPU heatsink. Of course, it’s not nearly as attractive as the stock coolers on the MSI Gaming Series, but at this point in the cards’ life, I’m starting to favor clean, efficient function over card fashion…

For the MSI Gaming RX series cards, replacing the heatsink was very easy (keep in mind that doing this voids the MSI warranty, which at this point I consider fairly void anyway, given the workload I’m putting these cards through). The ARCTIC cooler also comes with thermal paste pre-applied, which makes installation that much faster and cleaner.

If you’d like more detailed information on replacing card heatsinks, just let me know. If enough people are interested, I’ll put some time into publishing content on it.

Scaling with Initial State

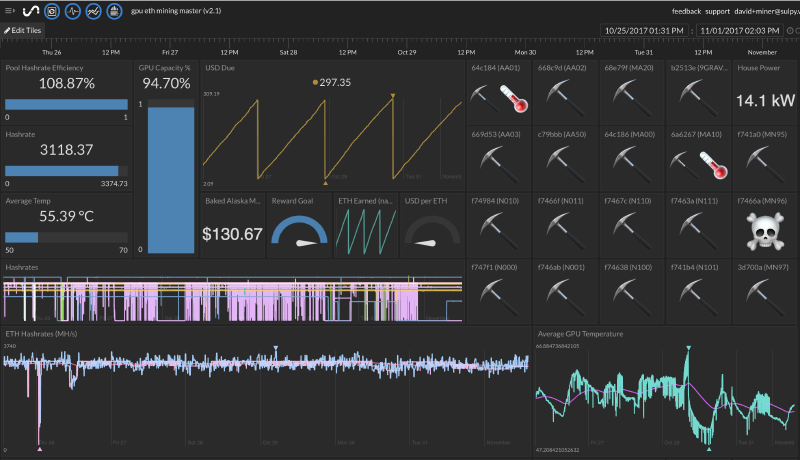

It’s been super easy to scale up the monitoring of my farm and progress using Initial State’s tools. Here is an updated look at my Dashboard:

I’ve been able to use this dashboard to keep a keen eye on each rig’s state, power consumption, costs, trends, etc. My notifications have also come in super handy. Originally, I had set up a notification for every time I lost any bit of capacity. But occasional capacity drops that recover automatically are statistically likely, and I started to realize they would create a growing stream of annoying notifications. However, since I have the advantage of having built Initial State, I also had a great opportunity to implement a new delta feature for triggers. It lets me set up triggers that only notify when capacity changes by a certain magnitude, indicating a much larger outage or trend.
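The delta trigger is configured inside Initial State rather than written by hand. The following is therefore only a minimal Python sketch of the general idea, not Initial State’s actual implementation. It compares each capacity reading against a reference value and alerts only when the change reaches a threshold. The `DeltaTrigger` class, its `threshold` parameter, and the rule that the reference moves only when an alert fires are all hypothetical. The readings are made-up numbers.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DeltaTrigger:
    """Hypothetical delta trigger: alert only when a reading moves at least
    `threshold` away from the reference value (the first reading, or the
    reading at the most recent alert)."""
    threshold: float
    reference: Optional[float] = None

    def check(self, value: float) -> Optional[str]:
        if self.reference is None:
            self.reference = value  # first reading becomes the reference
            return None
        delta = value - self.reference
        if abs(delta) < self.threshold:
            return None  # small blips, e.g. a GPU that drops and auto-recovers
        self.reference = value  # re-arm relative to the new capacity level
        direction = "dropped" if delta < 0 else "rose"
        return f"Capacity {direction} by {abs(delta):g} MH/s to {value:g} MH/s"


# Illustrative farm-wide hashrate readings in MH/s (made-up numbers)
readings = [1488, 1457, 1488, 1240, 1240, 1488]
trigger = DeltaTrigger(threshold=150)
alerts = [msg for msg in (trigger.check(r) for r in readings) if msg]
for msg in alerts:
    print(msg)
```

Here the 31 MH/s dip and recovery stays quiet. Only the 248 MH/s outage and its recovery produce alerts. Because the reference moves only when an alert fires, a slow downward drift also crosses the threshold eventually. That is one way a single rule can catch trends as well as sudden outages.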